Convex Optimization 2026, 17 - Saddle-Point Interpretation and Optimality Conditions

A symmetrical formulation for the primal and dual optimization problem. Assuming there are no equality constraints, extend the result by noting

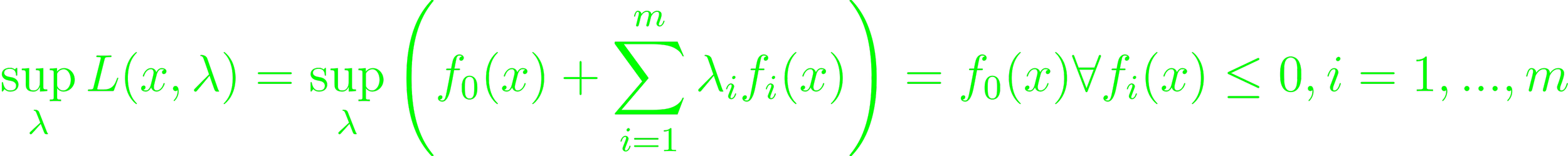

The sup is infinity everywhere else (p. 237). The same is true, if x is not feasible. If fi(x) ≤ 0, the optimal choice sets λ = 0, so sup L = f0. The optimal value for the primal problem then is infx supλ L(x, λ); and the dual function as supλ infx L(x, λ). The weak duality sees supλ infx L(x, λ) ≤ infx supλ L(x, λ) (Eq. 5.45). The strong duality occurs if the previous statement is an equality. Then, the order of the minimization over x and maximization over λ can be switched without affecting the result. Additionally, Eq. 5.45 doesn't depend on properties of L, so equivalent statements can be made about f, which resolves in the max-min inequality. At equality, this yields the saddle-point property (5.4.1). A pair w' ∈ W, z' ∈ Z is a saddle-point of f if f(w', z') ≤ f(w', z') ≤ f(w, z') ∀ w ∈ W, z ∈ Z (5.4.2).

Given a dual feasible (λ, ν), there is a lower bound on the optimal value of the primal problem p' ≥ g(λ, ν). Dual feasible points are then a certificate of the existence of that lower bound. Strong dualities imply these certificates to be arbitrarily good. Dual feasible points bound how suboptimal it is without knowing p' explicitly. The gap between primal and dual objectives is the duality gap (p. 241). In optimization algorithms, these can be used for heuristic stopping criteria (5.5.1). If primal and dual optimal values are equal, a primal optimal x* and (λ*, ν*) a dual optimal point, then f0(x*) = g(λ*, ν*) = infx (f0(x) + ∑λ*ifi(x) ∑νj* hi(x)) ≤ f0(x*). Obviously, equality holds (5.5.2).

Differentiable functions fi, hj; i ≤ m, j ≤ p, along with primal and dual optimal points with zero duality gap, x* minimizes L(x, λ*, ν*) over x, and its gradient vanishes at x*. From here emerges the Karush-Kuhn-Tucker (KKT) conditions for nonconvex problems:

- fi(x*) ≤ 0

- hi(x*) = 0

- λi* ≥ 0

- λi*fi(x*) = 0

- ∇f0(x*) + ∑λi*∇fi(x*) + ∑νj*∇hj(x*) = 0

For convex problems, these are are essentially the same. In convex optimization problems with differentiable objective and constraint functions satisfies Slater's condition, then they are necessary and sufficient for optimality. The KKT conditions can then be used as a stand-in for the objective (5.5.3).

Strong duality and optimal point is known, then minimizer of L is unique. If the problem's solution is primal feasible, it must be primal optimal, and if it's not, no primal optimal point exists. This is can be a analytically solvable condition.